Scaling Moderation Transparency at Fanvue

Building an account health and user warnings system for creators, compliance, and trust at scale.

Fanvue is a rapidly growing creator economy platform headquartered in London. It lets creators distribute paid exclusive content and builds AI-powered creator tools. In early 2026, the company announced a $22M Series A and a $100M+ annualised run rate, signaling strong market traction and, with it, increasing complexity in content moderation and compliance.

What we set out to do.

- G1: Align creator-facing UX with internal moderation workflows

- G2: Reduce reliance on email-only communication

- G3: Enable creators to self-serve before contacting support

- G4: Support cumulative warning logic (e.g. 3 warnings = ban)

- G5: Create a scalable system that could adapt to future legal changes

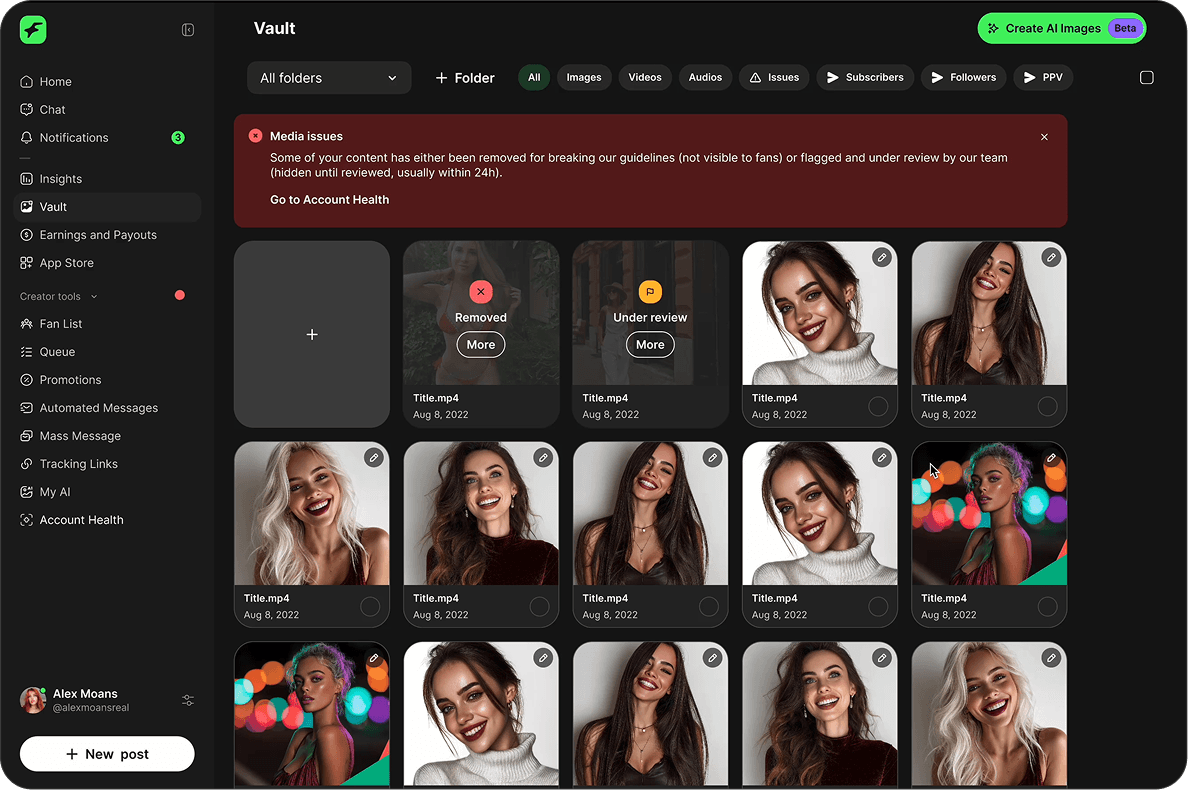

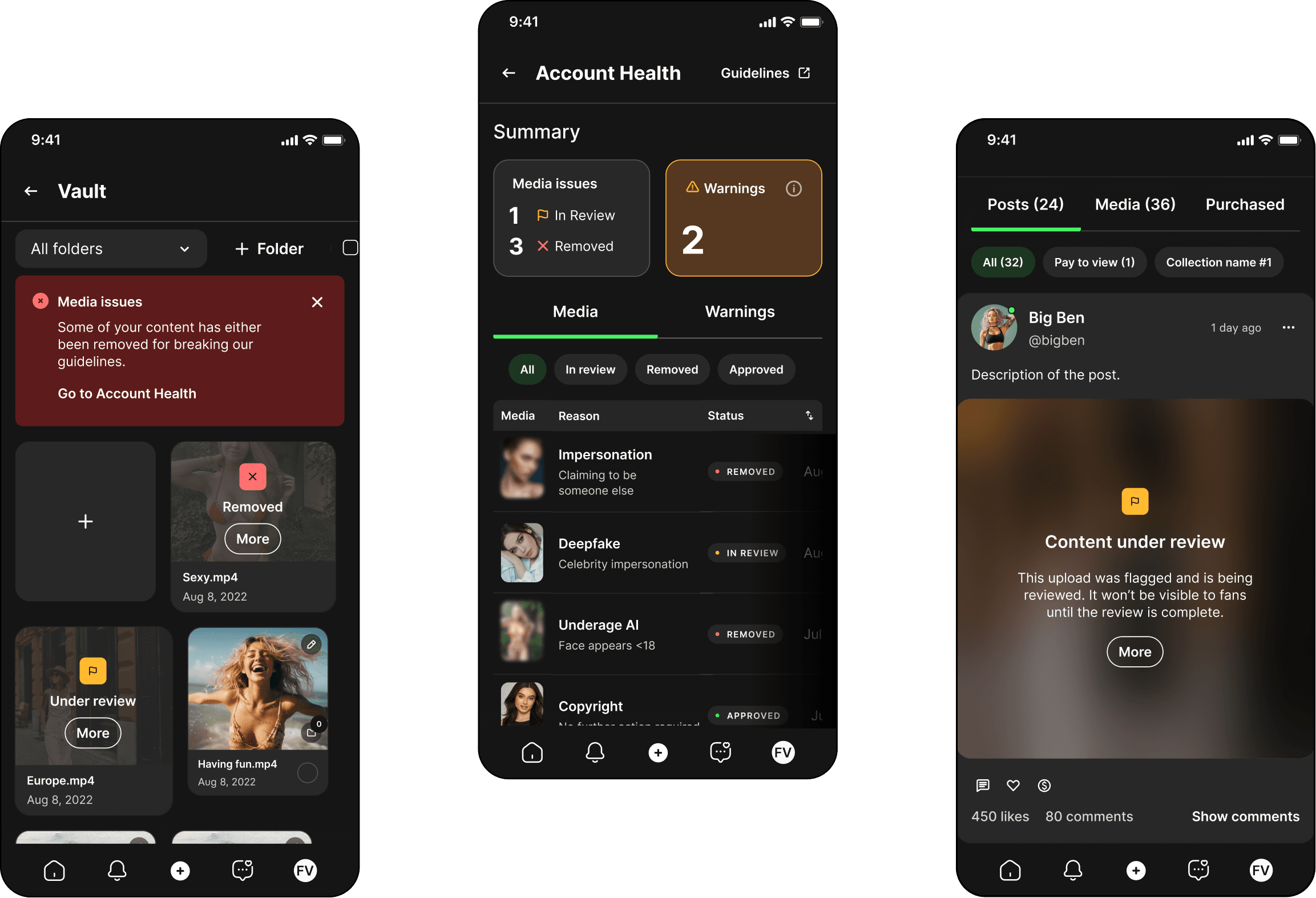

What shipped.

- Centralized Account Health experience for creators

- User Warnings system replacing the previous strike logic

- Warning types, severity, account status, and escalation risk clearly communicated

- Moderation team productivity increased with a unified internal toolset

- Spontaneous positive creator feedback across Discord and community channels

Role & Ownership.

- Problem framing and system definition

- UX strategy for Account Health and Warnings

- Translation of compliance policy into product UX

- Alignment across Product, Trust & Safety, Support, and Engineering

- Core information architecture and interaction models

Why did we build this?

Creators learned of violations only by email, in the form of a “Strike”. This led to:

- Missed or disputed warnings

- Repeated violations

- High support load (“I didn't see the email”)

- Heavy enforcement (login locks, manual follow-ups, etc.)

The platform needed a moderation system that could:

- Clearly communicate violations to creators

- Support cumulative enforcement rules

- Provide a unified source of truth for moderation, support, and creator-facing UIs

The existing strike model fell short in several ways:

- 20+ violation types handled by a single strike model

- No clear escalation rules or ban thresholds

- No "in review" state — content was removed without context

- No proportional response to violation severity

- No educational feedback to help creators understand the rules

Creators could not tell:

- What a Strike for a violation meant

- Whether a Strike was final before a ban

- What risk they were approaching

- Consistent tracking

- Clear user-facing representations of enforcement

- Support had to constantly ask moderators when creators reached out to complain about strikes

A shared source of truth.

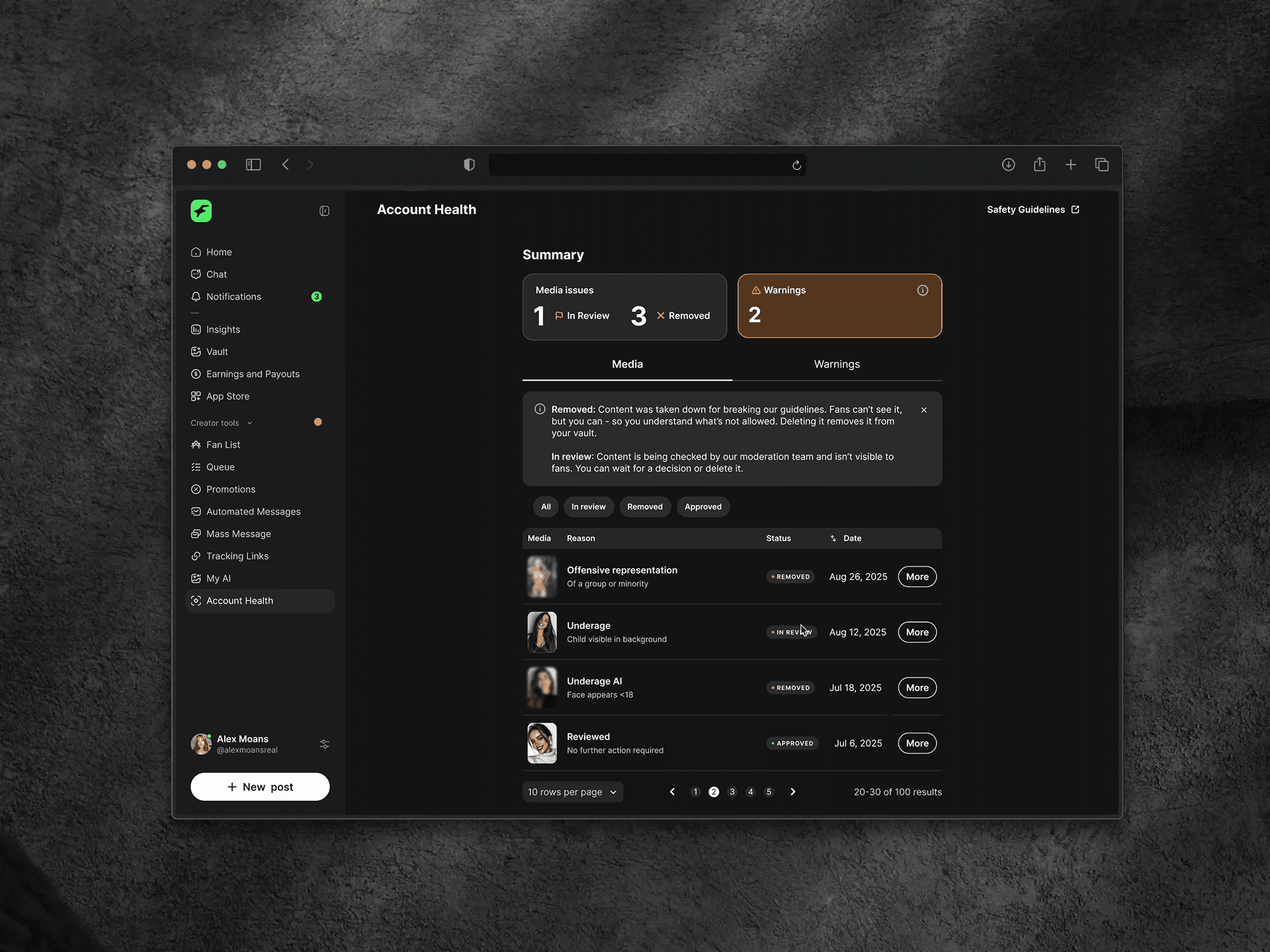

- Creator-facing Account Health shows overall account status, active warnings and severity, escalation risk, and a clear explanation of why action was taken.

- User Warnings surface the date issued, violation type, severity, whether it is a final warning, and account risk status.

- Clear status communication at every stage: In review, Removed, Approved.

- Creators can self-delete removed content directly from their vault.
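The fields and statuses listed above can be sketched as a small data model. This is a minimal illustration, not Fanvue's implementation: the class and function names, the severity levels, and the risk labels are all hypothetical, and the threshold of 3 is taken from the "3 warnings = ban" example in the goals rather than from a confirmed policy.

```python
from dataclasses import dataclass
from datetime import date
from enum import Enum


class Severity(Enum):
    # Hypothetical severity levels; the source does not name them.
    LOW = 1
    MEDIUM = 2
    HIGH = 3


class ContentStatus(Enum):
    # The three creator-visible content states named in the case study.
    IN_REVIEW = "In review"
    REMOVED = "Removed"
    APPROVED = "Approved"


@dataclass(frozen=True)
class CreatorWarning:
    # Fields a User Warning surfaces to the creator.
    issued_on: date
    violation_type: str
    severity: Severity
    is_final: bool = False


# Assumption: taken from the "3 warnings = ban" example, not a confirmed rule.
BAN_THRESHOLD = 3


def account_risk_status(warnings, ban_threshold=BAN_THRESHOLD):
    """Derive a hypothetical account risk label from active warnings."""
    count = len(warnings)
    if count >= ban_threshold:
        return "banned"
    # One warning away from the threshold, or an explicit final warning.
    if count == ban_threshold - 1 or any(w.is_final for w in warnings):
        return "at risk"
    if count > 0:
        return "warned"
    return "good standing"
```

Deriving the risk label from the warning list, rather than storing it separately, is one way to keep the creator-facing view and the internal tooling from drifting apart.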

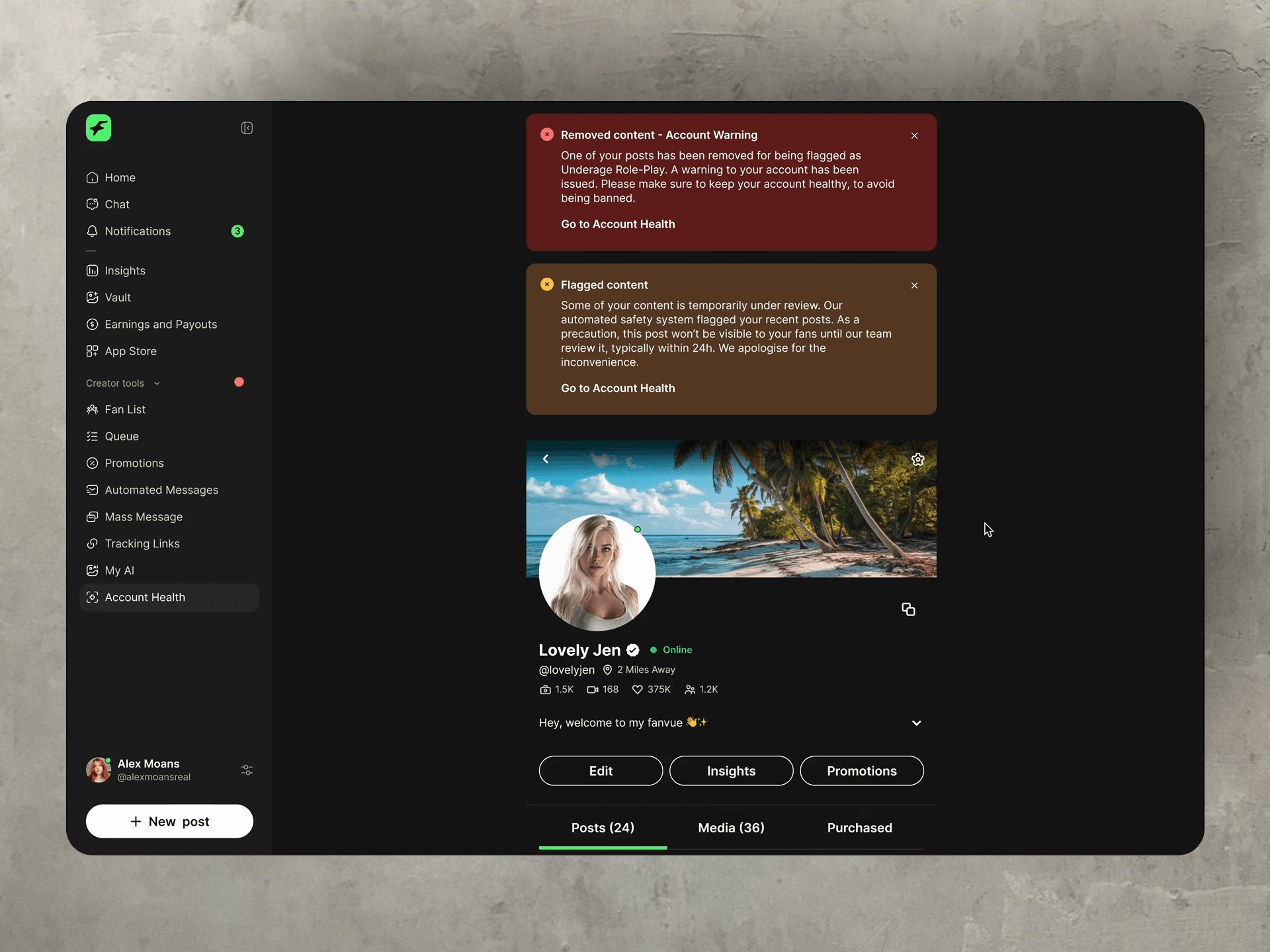

- Homepage banner

- Notifications

- Profile page

- Content Vault

- Emails

- Per-violation type templates

- Clear CTAs linking to Account Health

- Links to guidelines for transparency

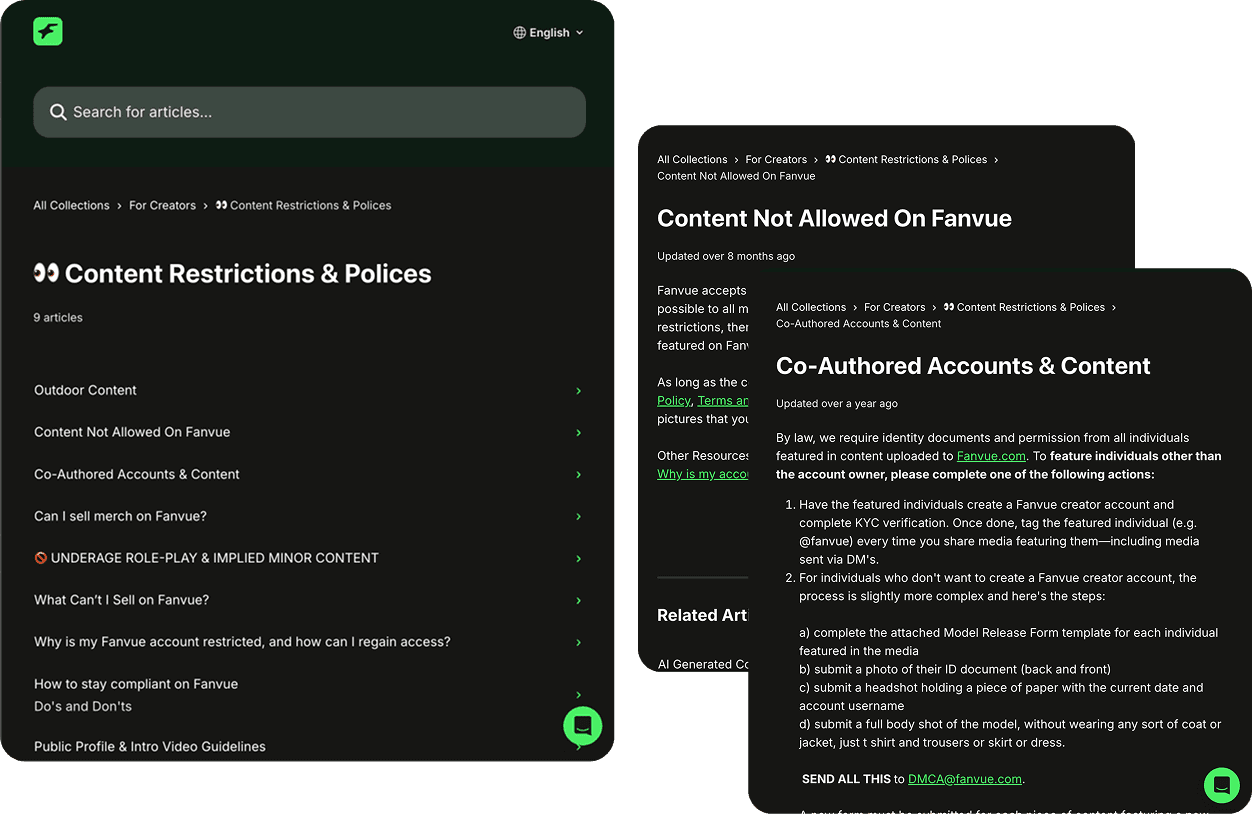

- Clearer guide for content restrictions

- Violation-type-specific pages

From punishment to prevention.

Policy guidance is embedded contextually throughout the experience, explaining:

- Why content was flagged

- What rule applies

- How to avoid future violations

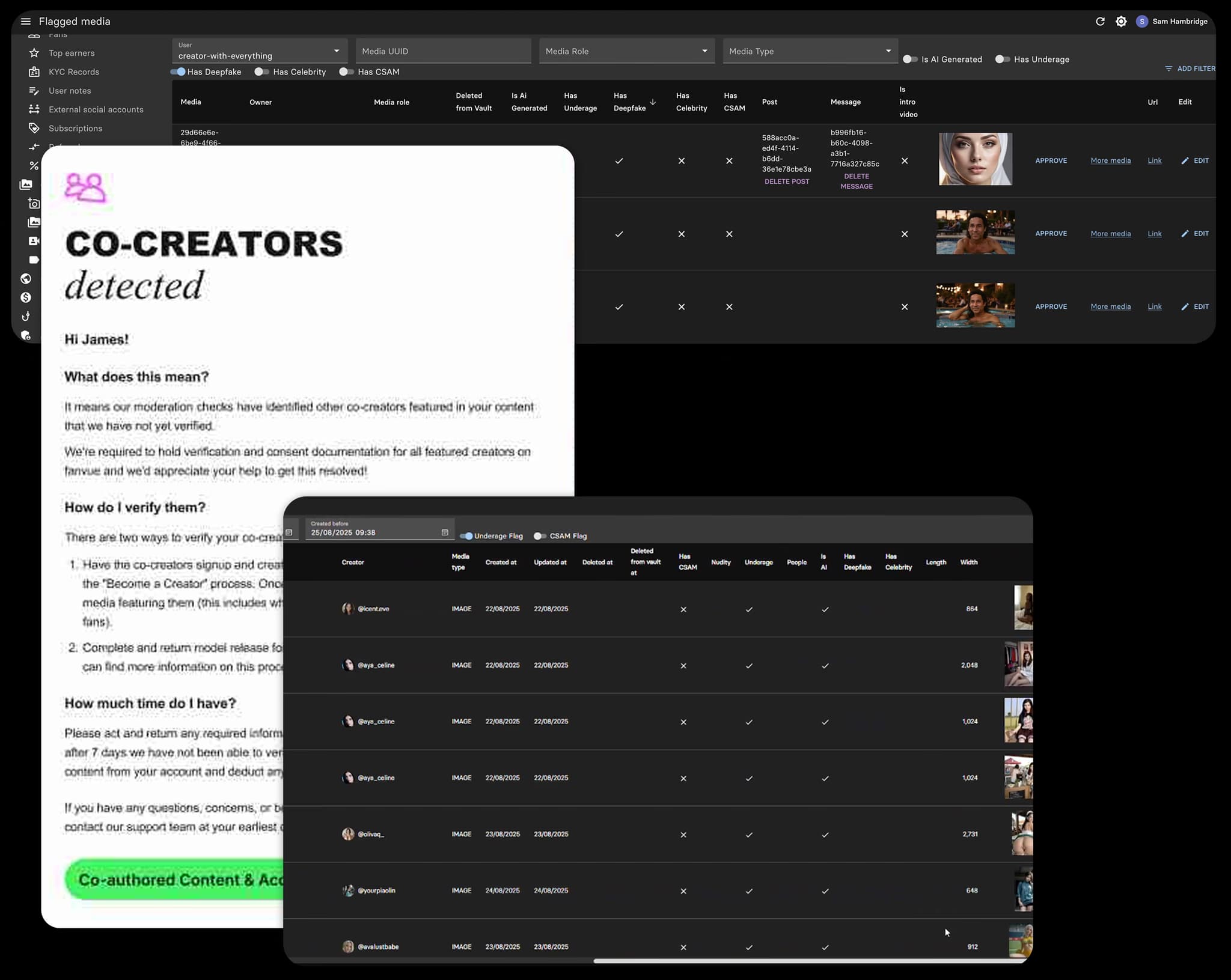

How moderation, support, and compliance got aligned.

Moderation actions, internal notes, and creator communications lived in separate systems. Support was the translator between all of them.

Account Health gave moderation, compliance, and support a shared view, so what happened internally was always reflected consistently in the creator experience.

- Log User Warnings (creator-visible)

- Use Admin Notes for investigations, fraud, AML, or legal context

- Access full context directly from Account Health

- No longer act as the “translator” between systems

Three choices that moved the numbers.

This project required making deliberate trade-offs between transparency, compliance, and operational scalability.

Replace strikes with warnings

“Strikes” were perceived as opaque and punitive. Warnings allowed clearer escalation and education.

Separate User Warnings and Admin Notes

Legal and investigative information should not be user-visible. Transparency must still be controlled and intentional.
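One way to picture this separation is a single account record with two projections: one for creators and one for staff. The sketch below is illustrative only; the type names, field names, and view functions are assumptions, not the platform's actual schema.

```python
from dataclasses import asdict, dataclass, field


@dataclass(frozen=True)
class WarningEntry:
    # Creator-visible: what happened and why.
    violation_type: str
    explanation: str


@dataclass(frozen=True)
class AdminNote:
    # Internal only: investigations, fraud, AML, or legal context.
    category: str
    body: str


@dataclass
class AccountRecord:
    warnings: list = field(default_factory=list)
    admin_notes: list = field(default_factory=list)


def creator_view(record):
    """Projection shown to the creator: warnings only, never admin notes."""
    return {"warnings": [asdict(w) for w in record.warnings]}


def staff_view(record):
    """Projection for moderation, compliance, and support: full context."""
    return {
        "warnings": [asdict(w) for w in record.warnings],
        "admin_notes": [asdict(n) for n in record.admin_notes],
    }
```

Because both views read from the same record, what staff see about a warning matches what the creator sees, while admin notes never enter the creator-facing payload.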

A centralized Account Health view

Inline indicators alone lacked context and history. Creators needed to understand patterns, not incidents.

A platform-level capability, not a feature toggle.

The system launched as a platform-level capability. It introduced creator-facing Account Health and Warnings views, internal moderation tooling aligned with compliance rules, and a shared mental model across Product, Trust & Safety, and Support.